Learning about AI isn't enough.

Your team needs to actually use it with clarity, confidence, and a framework that fits your mission.

Workshops built for teams that want to move, not just learn.

Most AI training leaves people informed—but stuck. It explains what AI is, demonstrates a few tools, and then sends everyone back to their desks without a clear next step.

These workshops are different.

This work is designed to be both rigorous and accessible. Participants are not expected to arrive with technical expertise. Complex ideas are made clear, practical, and usable so faculty and leaders can engage with confidence.

People often leave these sessions not only understanding AI more deeply but also feeling supported and equipped to try something new.

This is not about adding more tools. It is about forming people who can think, decide, and lead in a world where AI exists.

Is this for you?

These trainings are designed for institutions and organizations that are ready to move beyond curiosity and into intentional, aligned action.

This work is a strong fit if:

You have interest, but not alignment.

AI conversations are happening across your institution, but they are inconsistent, siloed, or unclear.

You have tools, but not shared practice.

Faculty and staff are experimenting, but there is no common language, framework, or expectation guiding that work.

You feel pressure to respond, but want to do it well.

You don’t want a reactive or purely technical approach; you want something grounded in your mission and values.

You need to build confidence across your community.

Faculty, staff, or leadership need clarity, examples, and space to engage AI without fear or confusion.

You are trying to connect AI to teaching, leadership, or formation.

Not just what AI does, but what it changes in how you educate, lead, and develop people.

This is not about adding more to your plate. It is about creating clarity, alignment, and forward momentum across your institution.

Most institutions begin with a workshop, then move into strategy and implementation over time.

What becomes possible?

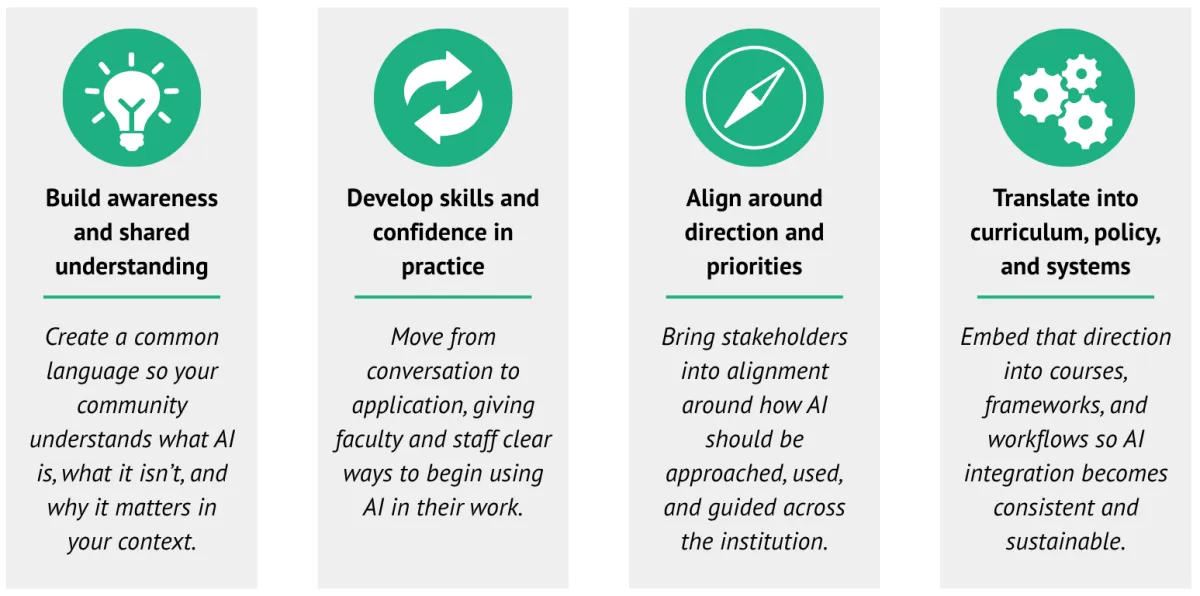

When training is aligned to your institution rather than to tools alone, it does more than introduce AI. It changes how your institution understands, approaches, and integrates AI.

After these engagements, institutions are able to:

Move from uncertainty to shared clarity.

AI is no longer abstract or overwhelming. Your community understands what it is, how it works, and what it means in your specific context.

Replace hesitation with confident practice.

Faculty and staff are no longer guessing. They have clear examples, language, and starting points they can immediately apply.

Shift from isolated experimentation to an aligned approach.

Instead of individuals working independently, your institution begins to develop a shared direction and common expectations.

Design learning that reflects an AI-enabled world.

Courses, assignments, and student experiences begin to evolve intentionally, not reactively.

Build momentum that extends beyond the session.

Training becomes a catalyst, not a one-time event, sparking continued progress in strategy, curriculum, and implementation.

Anchor AI in what matters most.

AI is not adopted for its own sake. It is integrated in ways that deepen your mission, strengthen your people, and clarify what you are forming.

This is where institutions begin to move from reacting to AI to intentionally forming within it.

See how this work takes shape in practice.

Download a one-page overview or a detailed guide to understand how training connects to strategy, implementation, and institutional change.

What does this look like in practice?

This is not a lecture. It is active, applied learning grounded in your institutional mission and current AI approach.

Faculty are:

Discussing real scenarios

Working through tensions and tradeoffs

Asking better, more focused questions

Building shared language across disciplines

This is not passive learning. It is the beginning of alignment.

Faculty leave with clarity, not just information.

A clear understanding of AI risks and opportunities

Shared language across faculty and leadership

Practical next steps aligned to your mission

Confidence to move forward intentionally

Workshop Offerings

These workshops are designed to meet institutions at different stages, from early awareness to implementation and system-level thinking. Many institutions choose to begin with a full faculty development day that combines multiple workshops into a single, cohesive experience.

Don't see exactly what you need? Let’s design an engagement that fits your institution’s goals, context, and timeline.

Foundations and Mindset

For institutions building awareness and shared language

AI Foundations

Build a clear, practical understanding of AI beyond headlines and hype. This session gives your institution a shared language and foundation for engaging AI thoughtfully across teaching, leadership, and daily work.

AI & Human Formation in Practice

Translate your mission and values into concrete practices in an AI-enabled world. This workshop helps faculty and leadership define what they are forming, and how that shows up in courses, decisions, and institutional life.

AI Decision-Making & Discernment

Move from “Can we use this?” to “Should we—and why?” This session equips faculty and leadership with a framework for making thoughtful, mission-aligned decisions about AI across contexts.

Teaching and Learning

For institutions ready to move into practice

Faculty AI Curriculum Design

Redesign assignments, assessments, and learning experiences to reflect how students actually work today. Faculty leave with practical changes they can immediately implement in their courses.

AI Academic Integrity in Practice

Move beyond unclear policies to a shared, workable approach to AI use. This workshop helps faculty design for clarity, so expectations are built into learning—not enforced after the fact.

Conversations About AI with Students

Equip faculty with the language and confidence to talk with students about AI use. The focus is on building clarity, trust, and shared understanding in the classroom.

Tools and Build

For institutions ready to build and scale

BoodleBox Bootcamp

Learn to work across AI models and build connected workflows. This hands-on experience moves participants from using AI tools to building systems that support teaching and institutional work.

Build Your Own Bot Workshop

Design and create simple, custom AI tools aligned to your own workflows. Participants leave with a working bot and the skills to build more over time.

Leadership and Strategy

For provosts, deans, and leadership teams

Preparing for an AI-Native World

Explore how AI-native students are changing expectations around learning and leadership. This session helps leaders think long-term and define a more intentional institutional direction.

Retreat Facilitation

Structured, decision-focused sessions that help leadership teams align around AI strategy and next steps. Designed to move conversations forward into clear, actionable direction.

Workshops can stand alone or be combined into a multi-session engagement based on your institution’s goals and stage of adoption.

Engagement Formats

Training can be delivered in a variety of formats, depending on your institution’s goals, timeline, and desired level of depth. These are not simply variations in time; they are designed to support different stages of institutional progress.

Workshop (2 hours)

A focused, high-impact session designed to introduce key ideas, build awareness, or address a specific need.

This format is ideal for launching conversations, creating shared language, and helping participants begin engaging AI with greater clarity.

Best for: faculty meetings, targeted sessions, or initial exposure

Half-Day Training

A more interactive experience that moves beyond understanding into application.

Participants engage in guided discussion, real scenarios, and hands-on work—allowing them to begin applying ideas in their own context.

Best for: faculty development sessions, departmental work, or focused implementation

Full-Day Training

A fully customized, high-impact day designed to move your institution forward—not just introduce ideas.

urse-level work.

This is not a series of sessions—it is a designed experience that builds clarity, momentum, and direction.

Best for: institution-wide initiatives, alignment efforts, or key transition moments

Custom Training Series

A multi-session engagement designed to build momentum over time.

Sessions are intentionally sequenced to support learning, application, and implementation—allowing institutions to move from awareness to sustained, integrated practice.

Best for: institutions seeking long-term change rather than one-time exposure

Each format can stand alone or be combined into a larger engagement aligned to your institution’s goals, context, and what it is ready to build next.

This work comes from experience, not observation.

As Director of the School of Communication and Faculty Fellow for AI at Lipscomb University, Sarah Gibson led the grassroots effort that helped make it one of the first independent institutions to provide AI tools universally to faculty, staff, and students.

She brings 17 years in higher education, a First Amendment scholar’s instinct for what’s at stake, and a storyteller’s awareness of what gets lost when institutions move too quickly without a clear human vision.

This isn’t borrowed expertise. It is lived experience shaped in institutional work and translated into practice to move institutions forward.

How training fits into institutional change.

Most institutions don’t start with strategy. They start with a workshop.

Training is often the first step in a larger progression:

Most institutions begin with a workshop, then move into strategy and implementation over time.

These sessions are designed to build clarity, confidence, and momentum while aligning faculty, staff, and leadership around a shared approach to AI. Whether used as an entry point or as part of a larger engagement, each training is grounded in your institution’s mission, context, and goals.

In these workshops, faculty and leadership step back from the noise and engage the real questions:

What are we protecting?

What are we building?

What are we becoming?

Whether your team is just beginning or already experimenting and ready to go further, these sessions provide a shared foundation, practical skills, and the language to move forward together.

This is where institutions begin to move from reacting to AI to intentionally forming within it.

What comes next?

Moving from workshops to strategy.

For many institutions, training is the starting point.

Once your team has a shared foundation, the work often deepens, moving from awareness and initial practice into strategy, structure, and sustained implementation.

From there, institutions typically expand into:

AI strategy and positioning

Human formation frameworks

Policy and governance

Curriculum integration

AI ecosystems and systems design

This is where your mission meets AI.

This is where clarity begins.

Ready to get your team moving?

All engagements begin with a complimentary discovery conversation.